DeepSeek's Origins: From High-Frequency Trading to Frontier AI

The emergence of DeepSeek as a primary force in global AI represents more than the rise of another competitor in the large language model sector. It signifies, rather, a fundamental shift in the economics and engineering philosophy of the field. While the Silicon Valley narrative of artificial intelligence often centres on venture capital-backed startups and massive "compute moats," DeepSeek’s origin story is rooted in the high-stakes, data-driven world of quantitative finance.

The company was incubated within Ningbo High-Flyer Quantitative Investment Management, a leading Chinese hedge fund co-founded by Liang Wenfeng. A graduate of Zhejiang University, Liang spent the years following the 2008 financial crisis refining the use of machine learning in quantitative trading. By 2019, High-Flyer had accumulated over 10 billion yuan in assets under management, giving it the capital to build its own large-scale computing infrastructure. In 2020, the firm established "Fire-Flyer I," a supercomputer dedicated to deep learning that served as the experimental bedrock for what would eventually become DeepSeek.

Unlike its American rivals, which often operate under the immediate pressure of commercialization and venture returns, DeepSeek was founded in 2023 as a research-intensive laboratory funded by High-Flyer’s internal R&D budget. This institutional genesis allowed the lab to prioritize long-term research goals and algorithmic efficiency over market optics. Insiders described the culture as "geeky" and "quirky," favoring specialized engineering talent over the sheer workforce scale seen at major tech conglomerates. This focus on "smart" learning rather than brute-force scaling would eventually allow the lab to challenge the established dominance of compute-intensive American firms.

The DeepSeek Shock: Efficiency as a Weapon

In late 2024 and early 2025, the release of DeepSeek’s V3 and R1 models sent shockwaves through the global technology and financial sectors, an event now frequently referred to as the "DeepSeek Moment." The market reaction was visceral: in the week after the R1 reasoning model was released, NVIDIA’s market capitalization fell by nearly $600 billion in a single day, at the time the largest one-day loss for any company in U.S. market history. The panic was driven by the realization that DeepSeek had undermined a core assumption of the AI age: that frontier performance required ever-growing amounts of energy and the latest high-end hardware.

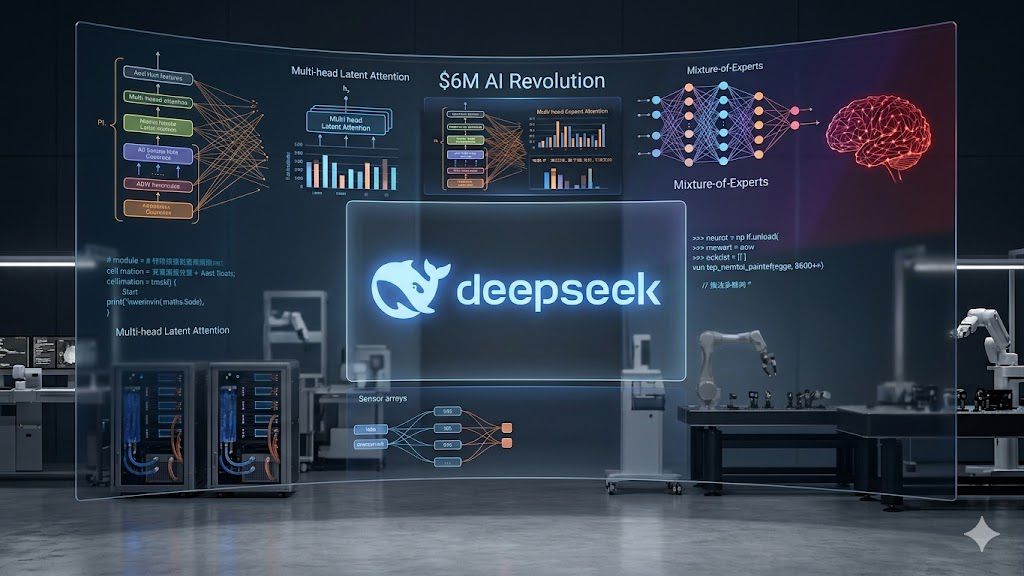

DeepSeek achieved frontier-level capabilities at a fraction of the cost of its peers. While training a model like GPT-4 is estimated to have cost well over $100 million, DeepSeek reported a figure of just under $6 million for the final training run of DeepSeek-V3, a number that excludes earlier research and experiments. This was not a result of cutting corners, but of architectural elegance. The lab introduced innovations like Multi-head Latent Attention (MLA), which reduced the memory overhead of the key-value cache that holds the model’s "context" by roughly 90%, and a refined Mixture-of-Experts (MoE) framework. In this configuration, only a small fraction of the model’s parameters (roughly 37 billion out of 671 billion, or about 5.5%) are activated for any given token, making the model computationally "light" during use.
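
The sparse activation described above can be illustrated with a toy example. The sketch below is a generic softmax top-k router written in plain Python, not DeepSeek's actual implementation, whose routing differs in detail. The expert functions, router scores, and helper names (`route_top_k`, `moe_forward`, `active_fraction`) are all hypothetical and chosen only for illustration; the 37 billion and 671 billion figures are the ones quoted in the text.

```python
import math


def active_fraction(active_params: float, total_params: float) -> float:
    """Share of a sparse model's parameters that are used for one token."""
    return active_params / total_params


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Select the k highest-scoring experts and renormalise their gate weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}


def moe_forward(x, experts, router_scores, k):
    """Run only the selected experts and mix their outputs by gate weight."""
    gates = route_top_k(router_scores, k)
    return sum(weight * experts[i](x) for i, weight in gates.items())


# Toy setup (assumption): 8 scalar "experts", where expert i multiplies by i + 1.
experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]
router_scores = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]

gates = route_top_k(router_scores, k=2)        # only 2 of 8 experts run
output = moe_forward(1.0, experts, router_scores, k=2)
fraction = active_fraction(37e9, 671e9)        # figures quoted in the article

print(f"experts used: {sorted(gates)}")
print(f"active share of parameters: {fraction:.1%}")
```

In this toy, six of the eight experts are never evaluated for the input, which is the source of the compute savings: the total parameter count sets the model's capacity, while the per-token cost scales with the much smaller active subset.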

Furthermore, the R1 model demonstrated that "reasoning" (the ability to self-correct and think through complex problems) could be achieved through large-scale reinforcement learning rather than just expanding dataset size. By showing that elite-level AI could be built cheaply and, in distilled form, run on commodity hardware, DeepSeek widened access to top-tier intelligence and weakened the assumption that "compute" was a definitive barrier to entry.

The 2026 Frontier: Autonomy and Agentic Ambition

As of April 2026, the focus has shifted toward the forthcoming DeepSeek-V4, a model rumored to reach the trillion-parameter scale. The anticipation surrounding V4 is not just about its size, but about its strategic significance. Reports indicate that DeepSeek has pivoted its training infrastructure away from Western hardware toward domestic Chinese chips, such as the Huawei Ascend series. By optimizing for these domestic processors, DeepSeek is effectively building a "fully autonomous" AI stack that is resilient to U.S. export controls, signaling a new phase in the geopolitical tech race.

The forthcoming version also promises to move beyond simple text generation into "agentic" AI: models designed to perform complex, multi-step tasks in interactive environments. The architecture is expected to feature "Engram Conditional Memory," a mechanism that would let the model selectively retain and recall information based on specific task contexts, with the aim of improving accuracy in massive data environments. This shift toward "Thinking in Tool-Use" is meant to let the AI act as an autonomous agent, identifying goals and correcting its own path if it encounters inconsistencies.

Ultimately, the significance of DeepSeek extends beyond the U.S.-China rivalry; it represents a "pluralization" of AI development. By proving that strategic ingenuity can be as valuable as raw computational power, DeepSeek has challenged the narrative of a closed AI ecosystem. As the company moves toward the multimodal and agentic frontiers of the V4 series, it remains a disruptive benchmark, forcing the entire industry to prioritize architectural innovation over the simple logic of brute force.

Syndicated by The China Technology Review.
